You’ve built something people want. Users are signing up, usage is rising, and your product is gaining traction. But growth brings pressure on your servers, your codebase, and your team.

What used to be smooth and predictable can quickly feel unstable without the right systems in place, especially for Software as a Service applications, where stability and responsiveness are everything.

And this isn’t just your product’s story. It’s part of a much larger movement. According to Fortune Business Insights, the global SaaS market is booming and is expected to grow from USD 315.68 billion in 2025 to USD 1,131.52 billion by 2032. That level of growth means more users, more expectations, and higher stakes for how your application performs at scale.

When a Software as a Service application begins to grow, the focus quickly shifts. What once worked perfectly for a small user base may start showing delays, instability, or rising operational costs. Teams that were once focused on building features are now firefighting slowdowns, outages, and infrastructure issues.

Scalability challenges in SaaS applications aren’t always about size. Sometimes, it’s about how ready your system is to evolve. As demand increases, earlier decisions about architecture and infrastructure start carrying more weight. Without the right foundation, even well-built products can start to lag behind.

In this blog, you’ll find a structured take on building scalable SaaS products. From architecture best practices to smarter cloud infrastructure planning, each section focuses on keeping your application ready for consistent growth without sacrificing speed or reliability.

Why scalability is critical for SaaS applications

Before diving into best practices, let's first grasp the concept of scalability in SaaS applications. There are two primary approaches to scalability: vertical scalability, which involves adding more resources to a single server (e.g., CPU, RAM), and horizontal scalability, which involves adding more servers to distribute the load.

Scalability matters because it directly shapes user experience: a system that cannot absorb rising demand shows up to users as delays, instability, and outages.

Architectural best practices

In the ever-evolving landscape of technology and architecture, staying updated with best practices is essential. Architectural decisions can greatly impact the scalability, performance, and maintainability of any system.

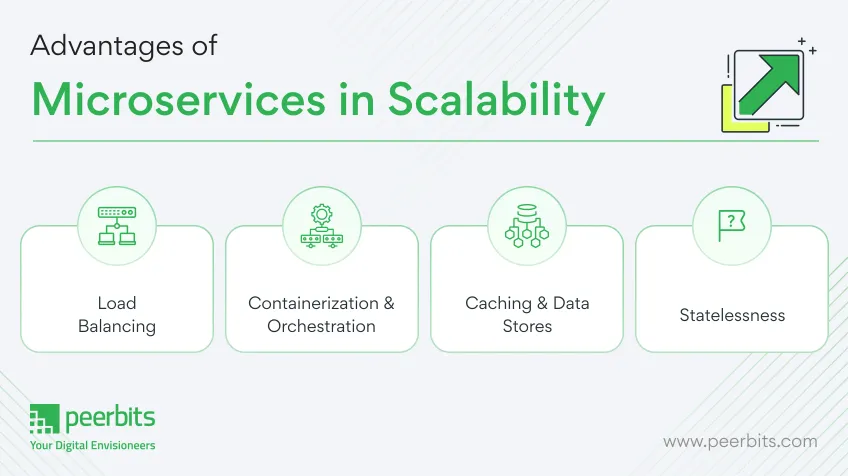

We will cover some of the core architectural best practices, with a focus on microservices architecture, load balancing, containerization, caching, and statelessness.

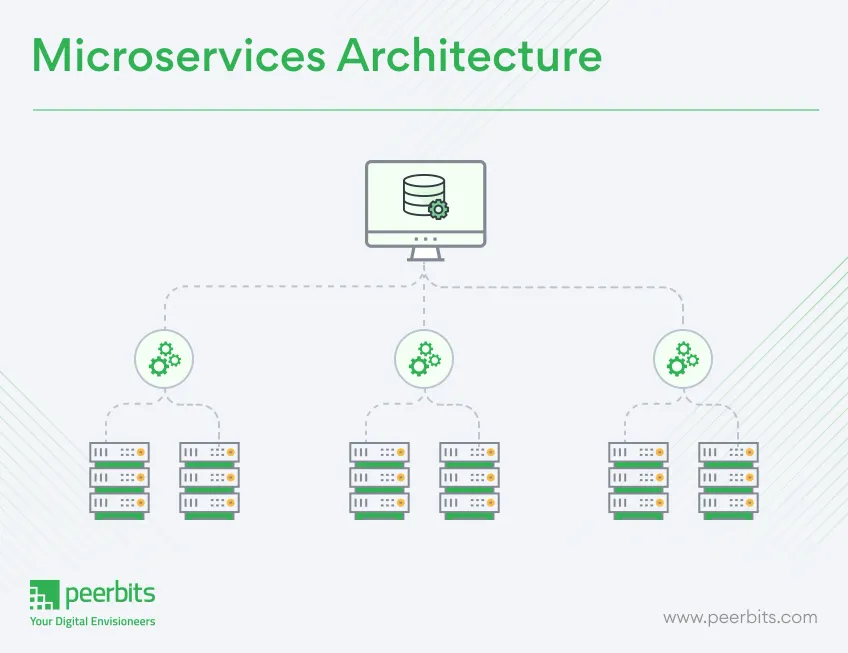

Microservices architecture

Microservices architecture is a design approach that structures an application as a collection of loosely coupled, small, and independently deployable services.

Each service serves a specific business function and communicates with others through APIs. This architectural style promotes agility, scalability, and fault tolerance.

Benefits of microservices in scalability

Microservices offer several advantages, with scalability being a prominent one. By breaking an application into smaller services, it becomes easier to scale individual components based on their demand. This fine-grained control over scalability ensures efficient resource utilization.

Load balancing

- Role of load balancers

Load balancers are critical components in distributing incoming network traffic across multiple servers. Their role is to ensure high availability, improve response times, and prevent overloading of any single server. Load balancing is essential for maintaining a responsive and fault-tolerant system.

- Load balancing algorithms

Load balancers use different algorithms to distribute traffic. Common algorithms include Round Robin, Least Connections, and IP Hash. Each algorithm has its own strengths and weaknesses, making it crucial to choose the right one based on your specific use case and requirements.
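To make the differences concrete, here is a minimal sketch of the three algorithms in Python. The class names and the `app-1` style server names are hypothetical, and a production load balancer would also handle health checks, weights, and concurrency, which this sketch leaves out.

```python
import hashlib
import itertools


class RoundRobin:
    """Hand out servers in a fixed rotation."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class LeastConnections:
    """Pick the server with the fewest in-flight requests."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def pick(self):
        server = min(self.active, key=self.active.get)
        self.active[server] += 1  # caller must release() when the request ends
        return server

    def release(self, server):
        self.active[server] -= 1


class IPHash:
    """Map each client IP to the same server while the pool is unchanged."""

    def __init__(self, servers):
        self.servers = list(servers)

    def pick(self, client_ip):
        digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
        index = int.from_bytes(digest[:8], "big") % len(self.servers)
        return self.servers[index]


SERVERS = ["app-1", "app-2", "app-3"]  # hypothetical backend names
```

Round Robin suits pools of similar servers handling similar requests, Least Connections helps when request durations vary widely, and IP Hash keeps a given client on the same server, at the cost of remapping many clients whenever the pool changes.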

Containerization and orchestration

- Containers and Docker

Containerization, particularly with Docker, has revolutionized application deployment. Containers encapsulate an application and its dependencies, making it easy to deploy consistently across different environments. Docker simplifies the packaging and distribution of applications, enhancing portability.

- Kubernetes for orchestration

Kubernetes, often referred to as K8s, is a popular orchestration platform for containerized applications. It automates deployment, scaling, and management of containerized workloads. Kubernetes simplifies the complexities of managing containers at scale, enabling robust and reliable deployments.

Caching and data stores

- Caching for performance

Caching is a technique used to store frequently accessed data in a quickly retrievable location. It significantly improves application performance by reducing the need to fetch data from slower storage, such as databases. Caching mechanisms like Redis or Memcached are widely used in various applications.
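One common way to apply this is the cache-aside pattern: read from the cache first, fall back to the database on a miss, then store the result with an expiry. The sketch below uses a small in-memory class as a stand-in for Redis or Memcached. `TTLCache`, `get_user_profile`, and `load_from_db` are hypothetical names chosen for illustration.

```python
import time


class TTLCache:
    """Tiny in-memory cache with per-entry expiry (stand-in for Redis or Memcached)."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            del self._entries[key]  # expired: treat as a miss
            return None
        return value

    def set(self, key, value):
        self._entries[key] = (self.clock() + self.ttl, value)


def get_user_profile(user_id, cache, load_from_db):
    """Cache-aside read: try the cache, fall back to the database, then populate."""
    key = f"user:{user_id}"
    cached = cache.get(key)
    if cached is not None:
        return cached
    profile = load_from_db(user_id)  # slow path, only taken on a miss
    cache.set(key, profile)
    return profile
```

The expiry bounds how stale a cached value can get. Choosing it is a trade-off between fresher data and fewer database reads.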

- Choosing the right data stores

Selecting the appropriate data store is a crucial architectural decision. The choice between relational databases, NoSQL databases, or hybrid solutions depends on factors like data structure, scalability, and consistency requirements. Understanding the trade-offs is essential for making informed decisions.

Statelessness

- Stateless vs. stateful applications

Statelessness describes how an application handles user data between requests. A stateless application does not keep user-specific data in server memory between requests, so any instance can serve any request, which makes it easier to scale and more fault-tolerant. In contrast, stateful applications retain user data on the server that handled earlier requests, which can lead to complexity and potential bottlenecks.

- State management strategies

Architects need to consider how an application manages state. State management can be handled on the client-side (e.g., with cookies or local storage) or server-side (e.g., using sessions or databases). The choice depends on the application's needs and requirements, with statelessness often favored for its advantages.
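As an illustration of client-side state, the sketch below signs a small payload with HMAC so that any server instance can verify it without a shared session store. It is a simplified example, not a replacement for an established token format: `SECRET_KEY`, `issue_token`, and `verify_token` are hypothetical names, and a real token would also carry an expiry time.

```python
import base64
import hashlib
import hmac
import json

SECRET_KEY = b"replace-me"  # hypothetical; load from a secret store in practice


def issue_token(payload):
    """Encode the payload and append an HMAC-SHA256 signature."""
    body = base64.urlsafe_b64encode(json.dumps(payload, sort_keys=True).encode("utf-8"))
    signature = hmac.new(SECRET_KEY, body, hashlib.sha256).hexdigest()
    return body.decode("ascii") + "." + signature


def verify_token(token):
    """Return the payload if the signature matches, otherwise None."""
    body, _, signature = token.rpartition(".")
    expected = hmac.new(SECRET_KEY, body.encode("utf-8"), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return None
    return json.loads(base64.urlsafe_b64decode(body))
```

Because the server keeps nothing between requests, instances can be added or removed freely. The trade-off is that a token cannot be revoked without some shared state, such as a deny list.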

Read More: Discover what to consider in microservices architecture for SaaS applications

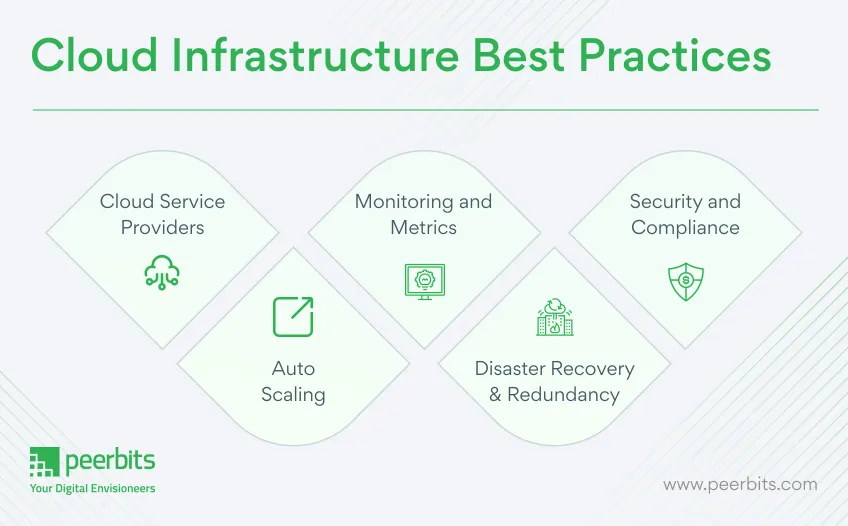

Best practices for reliable and scalable cloud infrastructure

Cloud infrastructure best practices involve optimizing resource allocation for cost-efficiency and implementing robust security measures to protect data. It also includes proactive monitoring and automation to ensure high availability and scalability while minimizing downtime and manual intervention.

Cloud service providers

- AWS, Azure, Google Cloud, and others

The cloud landscape is a vibrant ecosystem with numerous providers. Amazon Web Services (AWS), Microsoft Azure, Google Cloud, and others offer a wide array of services to accommodate diverse business needs. The choice of provider should align with your specific requirements, such as pricing, available services, and data center locations.

- Vendor selection considerations

When selecting a cloud provider, consider factors like cost, reliability, data center locations, support, and the vendor's ecosystem of services. Evaluate your business's long-term goals and select a provider that can grow with you. It's essential to assess the Total Cost of Ownership (TCO) and Service Level Agreements (SLAs) to make an informed choice.

Auto-scaling

- What is auto-scaling?

Auto-scaling is a vital feature of cloud infrastructure that allows your applications to automatically adjust resources based on workload. This ensures that you're not overpaying for idle resources or struggling with insufficient capacity during traffic spikes. Auto-scaling enhances system performance, cost-efficiency, and user experience.

- Configuring auto-scaling rules

To implement auto-scaling effectively, define clear rules and triggers for resource scaling. Monitor metrics such as CPU utilization, network traffic, or application response times, and set thresholds for scaling up or down. Implementing these rules requires a balance between maintaining performance and optimizing costs.
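A scaling rule of this kind can be sketched as a small function. The version below uses a proportional rule, similar in spirit to the one documented for the Kubernetes Horizontal Pod Autoscaler: scale the replica count by the ratio of observed to target CPU. The function name and all default values (60% target, 10% tolerance, 2 to 20 replicas) are assumptions for illustration, not recommendations.

```python
import math


def desired_replicas(current, cpu_percent, target_percent=60.0,
                     tolerance=0.1, min_replicas=2, max_replicas=20):
    """Proportional scaling rule clamped to a min/max replica count."""
    ratio = cpu_percent / target_percent
    if abs(ratio - 1.0) <= tolerance:
        return current  # close enough to target: avoid flapping
    wanted = math.ceil(current * ratio)
    return max(min_replicas, min(max_replicas, wanted))
```

For example, 4 replicas at 90% CPU against a 60% target gives a ratio of 1.5, so the rule asks for 6 replicas. The tolerance band keeps small fluctuations from triggering constant changes, and the minimum and maximum cap both the cost and the risk of scaling too far down.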

Monitoring and metrics

- Importance of real-time monitoring

Real-time monitoring is the backbone of cloud infrastructure management. It enables you to gain insights into the health and performance of your applications and services. Monitoring helps identify issues proactively, optimize resource usage, and ensure a smooth user experience.

- Key metrics to watch

Key metrics to monitor include CPU utilization, memory usage, network traffic, error rates, and response times. Cloud providers offer monitoring tools and services, but you can also integrate third-party solutions for a more comprehensive view. Monitoring is essential for making data-driven decisions and ensuring your infrastructure runs efficiently.
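Two of these metrics, error rate and response time, can be computed from raw request samples as in the sketch below. The function names and the alert thresholds (1% errors, 500 ms at the 95th percentile) are hypothetical. In practice a monitoring service would compute these values for you.

```python
import math


def error_rate(status_codes):
    """Fraction of responses with a 5xx status."""
    if not status_codes:
        return 0.0
    errors = sum(1 for code in status_codes if code >= 500)
    return errors / len(status_codes)


def percentile(values, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def should_alert(status_codes, latencies_ms, max_error_rate=0.01, max_p95_ms=500):
    """Alert when either the error rate or the p95 latency crosses its threshold."""
    return (error_rate(status_codes) > max_error_rate
            or percentile(latencies_ms, 95) > max_p95_ms)
```

Percentiles are usually more informative than averages for response times, because a small share of very slow requests can hide behind a healthy-looking mean.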

Disaster recovery and redundancy

- Data backups and recovery strategies

Disaster recovery is a critical aspect of cloud infrastructure. Regularly back up your data and ensure that you have a well-documented recovery plan in case of data loss or system failures. Cloud providers often offer backup and recovery services, but you should also consider third-party solutions for added redundancy.

- Geographical redundancy

Geographical redundancy is a practice that involves replicating data and applications across multiple data centers or regions. This approach enhances fault tolerance and ensures business continuity, even in the face of regional disasters. Consider your geographical redundancy options, especially if your business is geographically diverse.
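At its simplest, the routing side of geographical redundancy is a preference-ordered failover: send traffic to the first region that passes its health check. The sketch below assumes health status is already collected elsewhere. The `pick_region` function and the region names are hypothetical.

```python
def pick_region(preferred_order, healthy):
    """Return the first healthy region in preference order, or None if all are down."""
    for region in preferred_order:
        if healthy.get(region, False):  # unknown regions count as unhealthy
            return region
    return None


REGIONS = ["eu-west", "us-east", "ap-south"]  # hypothetical region names
```

Failover only helps if the standby region already holds a recent copy of the data, which is why replication and routing need to be planned together.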

Security and compliance

- Security best practices in the cloud

Security in the cloud requires a multi-layered approach. Employ encryption for data at rest and in transit, implement strong access controls, regularly update and patch your systems, and conduct security audits. Security best practices are vital to protect your data and maintain customer trust.

- Compliance requirements for SaaS

Depending on your industry and location, your cloud infrastructure may be subject to specific compliance regulations, such as GDPR, HIPAA, or PCI DSS. Ensure your infrastructure aligns with these requirements, and implement the necessary controls and monitoring to demonstrate compliance.

Architectural best practices are fundamental to creating scalable, efficient, and robust systems. Microservices architecture, load balancing, containerization, caching, and statelessness are key concepts that architects and developers should master.

By embracing these practices and understanding their benefits, you can build systems that are well-prepared for the ever-changing demands of the modern technological landscape.

Read More: Check out the latest trends for SaaS application architecture

Real-world examples of SaaS applications scaling successfully

To provide a practical perspective, we'll present real-world case studies of SaaS applications that have successfully scaled. We'll explore the challenges they faced and how they overcame them, offering valuable insights into real-world scalability.

Slack

Slack, the popular team collaboration platform, is an exemplary case of SaaS application scaling. As its user base grew rapidly, it faced the challenge of ensuring real-time communication while maintaining a seamless user experience.

Key takeaways:

Slack's success can be attributed to its microservices architecture, allowing for modular scalability. They prioritized real-time updates by using technologies like WebSockets. The adoption of multiple data centers ensured redundancy and reliability.

Salesforce

Salesforce, a pioneer in cloud-based Customer Relationship Management (CRM), serves a vast array of businesses globally. Their challenge was to maintain performance and reliability as they expanded to serve more clients.

Key takeaways:

Salesforce embraced a multi-tenant architecture, sharing infrastructure among clients efficiently. They focused on data center and geographic redundancy for high availability. Extensive use of caching helped minimize database loads and improved response times.

Challenges faced and how they were overcome

Challenges in scaling a SaaS application often include data synchronization bottlenecks and increased latency as user numbers grow. These were overcome by implementing microservices architecture, distributing data, and utilizing auto-scaling cloud services, ensuring smooth performance and rapid response times even as the user base expanded.

Infrastructure and resource scalability

Challenges: As SaaS applications gain users, infrastructure must scale accordingly to meet demand. Ensuring that the application is performant and responsive is critical.

Solutions:

- Implement auto-scaling to allocate resources dynamically.

- Utilize cloud infrastructure to provision resources as needed.

- Employ content delivery networks (CDNs) to distribute content globally and reduce latency.

Data management and security

Challenges: Safeguarding user data, ensuring compliance with data privacy regulations, and managing data growth are constant concerns.

Solutions:

- Encrypt sensitive data both in transit and at rest.

- Implement robust access controls and authentication mechanisms.

- Regularly audit and monitor data access for security and compliance.

- Maintaining a Seamless User Experience

In a digital age where SaaS applications are transforming businesses, mastering scalability is no longer an option but a necessity. With the insights and best practices offered in this blog, you'll be better equipped to create SaaS applications that can grow and adapt to meet the needs of an ever-expanding user base.

Conclusion:

Scalability has become a core requirement for SaaS applications. As user demand grows, every early technical shortcut or loosely made decision can turn into a costly bottleneck.

Building a product that users love is only the first step. Keeping it fast, stable, and cost-effective as it grows is where the real challenge begins.

The right architecture and cloud infrastructure setup won’t just save you from firefighting later. It gives your team the confidence to build, release, and grow without hesitation.

If your SaaS product is gaining traction or even preparing to scale, now is the time to act. Get the foundation right, so growth doesn’t break what you’ve worked hard to build.