There are two ways to gauge a process’s output: by quantity or by quality. Focusing on the latter tends to drive far better results because, in the end, what matters is how good the product is, not how many products there are.

Guided by the principles of our result-driven process, we at Peerbits believe in delivering excellence the right way. By that we mean that quality assurance lies at the core of our company’s DNA.

The worth of any output is ultimately measured by the experience the client has once the product is deployed.

The idea is to deliver solutions in the best yet simplest manner. At Peerbits, we not only focus on catching bugs and mitigating malware risks but also follow a distinctive, strategic approach to quality assurance aimed at setting a benchmark for quality testing standards.

Our testing strategy is based on prioritizing client requirements. Given the pervasive nature of technology solutions, we tailor each strategy to best fit those requirements.

Testing process

Results come not from plans on paper but from what is actually executed. For this reason, we follow a defined quality process that streamlines our efforts and ensures a controlled output that matches the project requirements.

Planning

A testing plan lays out how the rest of the process is expected to run. It covers the testing tasks and defines all the activities and the individuals in charge of each process milestone.

The start date, the completion date, and the time and tasks assigned are all proposed in the planning phase.

Executing

The post-planning phase comprises test design, in which test cases are drawn up and executed according to the functional specification received from the client.

Identifying test data and tracing requirements to test cases are of paramount importance in this phase.

Checking

We scrutinize each step critically and keep a close eye on our review processes before going ahead with the planned activities.

Act

Acting on the planned activities is essential because this is where the actual, result-oriented tasks are carried out. The primary task involves executing our test cases and keeping a log of the bugs found in the process.

We go out of our way to resolve the bugs and retest the fixes to improve the output quality.

Only upon reaching the desired quality do we hand over the project to the client, and we remain open to all feedback. In most cases, the comments have come in the form of praise, leaving little room for critical feedback.

That said, it is our commitment and adherence to the testing process that yields satisfactory results for clients.

Understanding Bugs

Software bugs exploit the vulnerabilities of a system or application, and they share the characteristics of real-life bugs except, of course, that they cannot be seen.

A software bug is technically an error, flaw, failure, or a fault in an application that generates an unexpected result.

Engaging our well-qualified, experienced, and passionate QA team is bound to drive the best results for your projects. Their expertise with testing tools such as Leantesting and Crashlytics ensures efficient quality control by eliminating annoying and unexpected bugs.

Classification of bugs and their impact on devices and applications

A blocker bug can kill your app entirely. Users cannot log in, nor can they access their calls and messages.

A critical bug is like an artificial limb that does not function as a real one: the app is impaired, but you can still go on using it.

Glitches are mostly the result of such bugs. For example, you may be unable to send a message in a group chat, yet the same message goes through when you send it in a personal chat.

A major bug breaks some parts of the app. It restricts certain user activities: for example, settings cannot be changed, attempts to adjust the volume have no effect, or images fail to upload.

A minor bug represents a negligible breakage that can be ignored unless you are a perfectionist. UI-related problems, such as a malfunctioning third entry field, can occur with minor bugs.

A trivial bug is rather embarrassing for the testing experts, and one can only hope it goes unnoticed. Though it may originate from a third-party service or a library, it has no bearing on the overall quality of the product.

A typo, other than one in the name of the app or on its main pages, is a typical example of a trivial bug.
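The five severity levels described above can be captured in a small data structure so that a bug list can be sorted consistently. The following is a minimal sketch, not Peerbits’ actual tooling: the `Severity` enum, the `triage` helper, and the `blocks_demo` rule (that only blocker and critical bugs hold back a demo) are assumptions made for illustration.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Severity levels from the classification above; a lower value is more severe."""
    BLOCKER = 1   # app unusable, e.g. users cannot log in
    CRITICAL = 2  # a flow fails but the app can still be used
    MAJOR = 3     # part of the app is broken, e.g. image upload fails
    MINOR = 4     # negligible breakage, e.g. one entry field misbehaves
    TRIVIAL = 5   # cosmetic, e.g. a typo outside the main pages


def blocks_demo(severity: Severity) -> bool:
    """Hypothetical rule: only blocker and critical bugs hold back a demo."""
    return severity <= Severity.CRITICAL


def triage(bugs: list[tuple[str, Severity]]) -> list[tuple[str, Severity]]:
    """Return the bugs ordered from most to least severe."""
    return sorted(bugs, key=lambda bug: bug[1])


if __name__ == "__main__":
    reported = [
        ("Typo on help page", Severity.TRIVIAL),
        ("Login fails", Severity.BLOCKER),
        ("Image upload fails", Severity.MAJOR),
    ]
    for title, severity in triage(reported):
        print(f"{severity.name:<8} {title} (blocks demo: {blocks_demo(severity)})")
```

Using an integer-backed enum keeps the ordering explicit, so the same scale can drive both sorting and any release rule a team chooses to adopt.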

Handing over the app to the customer (from Demo 1 to βDemo)

Clients are driven by the satisfaction a vendor offers, and once they have been delivered what they expected, the business relationship becomes a harmonious one.

Quality is what generates positive word of mouth: clients go on to recommend the vendor to their connections on the strength of their good experience.

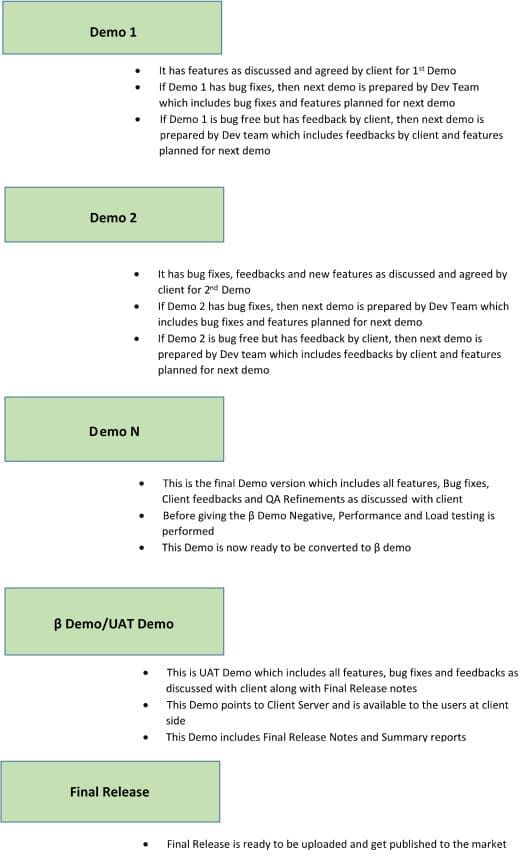

Delivery Process

We have a streamlined procedure for delivering the mobile application to clients. The demo phases mentioned below follow the defined milestones. The image below highlights the delivery process of the application at Peerbits.

Types of Testing

Each demo is carried out using the testing methodology best suited to the application’s requirements. Each demo comes with a particular set of tests that is performed before the app is delivered to the client.

New feature testing

New feature testing is a thorough functionality check to ensure that new features are implemented and that the current build works correctly.

Sanity testing

Sanity testing is used to recheck the features that were implemented in an earlier demo stage. It aims to confirm that the functionality still matches the requirements.

Smoke testing

The term “smoke testing” comes from engineering slang: powering on a device and checking that it does not start “smoking.”

In our case, smoke testing is a quick verification of all the basic functionality, performed before the more complex regression testing. For example, if a tester is unable to log in, there is no point in checking how other built-in features work together until this major bug is fixed.
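The idea that smoke checks act as a gate in front of regression testing can be sketched as follows. This is an illustrative outline only: the function names, the dictionary-of-checks layout, and the shape of the returned result are hypothetical and do not describe a specific test framework.

```python
from typing import Callable

# A check is any zero-argument callable that returns True when it passes.
Check = Callable[[], bool]


def run_suite(checks: dict[str, Check]) -> list[str]:
    """Run every check and return the names of those that failed."""
    failed = []
    for name, check in checks.items():
        try:
            passed = check()
        except Exception:
            passed = False  # a crashing check counts as a failure
        if not passed:
            failed.append(name)
    return failed


def run_demo_tests(smoke: dict[str, Check], regression: dict[str, Check]) -> dict:
    """Run smoke checks first; run regression only if all of them pass."""
    smoke_failures = run_suite(smoke)
    if smoke_failures:
        # Basic functionality is broken, so deeper testing would be wasted effort.
        return {"stage": "smoke", "failed": smoke_failures, "regression_ran": False}
    return {"stage": "regression", "failed": run_suite(regression), "regression_ran": True}


if __name__ == "__main__":
    result = run_demo_tests(
        smoke={"login": lambda: False, "app_launches": lambda: True},
        regression={"chat_with_notifications": lambda: True},
    )
    print(result)
```

In the sample run the login check fails, so the regression suite is never started, which mirrors the login example in the text.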

Regression testing

When a large chunk of functionality has been implemented and thoroughly tested, QA engineers perform regression testing so as to be certain that all the components of an app are working together without causing new bugs to appear.

There can be rare exceptions: iterations during which only a few major features are added. For example, implementing an in-app chat feature may take a considerable amount of time and require close attention to its sub-features. In this case, we ideally postpone regression testing until the next demo.

Non-functional tests

Testers also perform non-functional tests that don’t touch upon an app’s specific features. We carry out the following non-functional tests:

- Installation testing has the sole purpose of checking that the installation and uninstallation processes complete without issues.

- Compatibility testing examines how an app performs across a multitude of operating system versions and on different devices.

- Usability testing deals with application performance, how intuitive the UI/UX is, and the overall usability of the app.

- Condition testing checks an app’s performance when the device is running on low battery or has no internet connection.

- Compliance checking ensures that apps comply with Google and Apple guidelines.

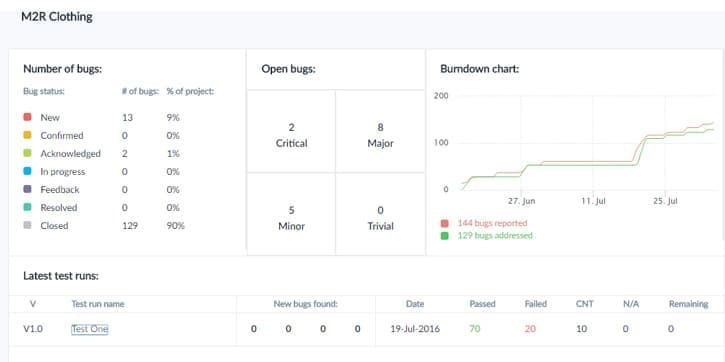

Submitting bugs

All bugs found during the testing process or surfaced by the crash reporting tool are logged against the corresponding project in the bug reporting tool or in Excel. Developers thus have a full list of issues to fix before the next demo starts.

These tools come in handy for identifying a bug along with its source. We typically use Leantesting during this process to pinpoint bugs precisely.
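When the bug list is kept in a spreadsheet rather than a dedicated tool, each bug can be written as one row of a CSV file that Excel can open. The sketch below assumes a hypothetical `BugReport` record; the field names are illustrative and are not taken from Leantesting, Crashlytics, or any Peerbits template.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class BugReport:
    """Hypothetical bug record; the fields are assumptions for illustration."""
    project: str
    demo: str
    title: str
    severity: str
    source: str  # e.g. "manual test" or "crash report"


def to_csv(reports: list[BugReport]) -> str:
    """Serialize bug reports as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(BugReport)])
    writer.writeheader()
    for report in reports:
        writer.writerow(asdict(report))
    return buffer.getvalue()


if __name__ == "__main__":
    reports = [
        BugReport("ChatApp", "Demo 2", "Login fails", "blocker", "manual test"),
        BugReport("ChatApp", "Demo 2", "Crash on image upload", "major", "crash report"),
    ]
    print(to_csv(reports))
```

Keeping the project and demo on every row makes it straightforward to filter the sheet down to the issues that must be fixed before the next demo.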

What to expect from testing

People generally believe that the goal of testing is to prove the absence of bugs. That is not the case at Peerbits: we consider testing a critical tool for finding dangerous bugs and enabling applications to meet business requirements.

It is almost impossible to deliver a 100% bug-free app, but it is certainly possible to meet 100% of the operational and business requirements. This is where Peerbits makes a difference, offering a highly satisfactory mobile app experience to businesses and users alike.

To make sure the application meets the business requirements, stays simple and almost bug-free, and carries no bug that could harm the company’s image, we perform and recommend beta testing (pre-release testing).

During beta testing, the application is handed to the client and to users outside the team, who are asked to uncover any flaws or issues in using the app. This testing is performed entirely from the potential user’s perspective, so that issues can be addressed before the final market release.

Quality analysis is certainly an important task as far as delivering the application is concerned, because an application’s utility depends entirely on the way users operate it.

Unless QA is performed, there is no way to gauge an app’s reliability.

Safeguarding a mobile application is important for enhancing the vendor’s brand image and for ensuring security and a delightful experience for end users.

Conclusion

Quality assurance checks whether the product created is of the best quality and fit for use. At Peerbits, we follow every standard, and our testing strategies are based on prioritizing clients’ needs.

We also have a streamlined procedure for delivering the mobile application to clients. Quality analysis is an essential task for every organization to gain customer satisfaction and attract potential clients.